In my previous article on running a Spring Batch application in Cloud Foundry, we looked at how a Spring Batch application can be executed as a one-off task in PCF. We created a Spring Boot batch application annotated as a Task and deployed it on PCF like any other cloud-native application. The task was then executed using PCF CLI commands. This approach works for a small number of batch applications with few interdependencies. With a microservices architecture, however, we usually end up with many small tasks that run either sequentially or in parallel to ingest and process data before it is written to the sink.

In this post, we’ll look at a very simple example of how to use Spring Cloud Data Flow to run a batch application in PCF. Spring Cloud Data Flow can be used to create data pipelines by orchestrating Streams and Tasks that can be deployed on multiple runtimes such as Cloud Foundry, YARN, and Mesos.

Spring Cloud Data Flow Server

It is assumed that you already have PCF Dev running locally. If not, you can follow the instructions here to run PCF locally on a VM.

Get the latest deployment artifact for the Spring Cloud Data Flow Server from the Spring repository and create a manifest file with the following properties.

---
applications:
- name: data-flow-server
  memory: 1g
  disk_quota: 2g
  path: spring-cloud-dataflow-server-cloudfoundry-1.2.4.RELEASE.jar
  buildpack: java_buildpack
  services:
  - df-mysql
  env:
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_URL: https://api.local.pcfdev.io
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_ORG: pcfdev-org
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_SPACE: pcfdev-space
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_DOMAIN: local.pcfdev.io
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_USERNAME: admin
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_PASSWORD: admin
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_SKIP_SSL_VALIDATION: true
    MAVEN_REMOTE_REPOSITORIES_REPO1_URL: https://repo.spring.io/libs-snapshot
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_TASK_SERVICES: df-mysql
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_TASK_MEMORY: 512
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_TASK_DISK: 512
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_TASK_INSTANCES: 1
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_TASK_BUILDPACK: java_buildpack
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_TASK_ENABLE_RANDOM_APP_NAME_PREFIX: false
    SPRING_CLOUD_DEPLOYER_CLOUDFOUNDRY_TASK_API_TIMEOUT: 360
    SPRING_CLOUD_DATAFLOW_FEATURES_EXPERIMENTAL_TASKSENABLED: true

Before deploying the Spring Cloud Data Flow Server to PCF with the cf push command, create a MySQL service using the command below. The service instance name must match the one listed under services in the manifest.

cf create-service p-mysql 512mb df-mysql
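The two deployment steps can be put together in a small script. This is only a sketch under a few assumptions: the manifest is saved as manifest.yml next to the server jar, the cf CLI is already logged in and targeting the PCF Dev org and space, and the service instance is named df-mysql to match the services entry in the manifest. The run helper and the DRY_RUN switch are illustrative additions, not part of the cf CLI.

```shell
#!/bin/sh
# Sketch of the deployment sequence: create the MySQL service, then push the server.
set -eu

# Assumption: the manifest shown above is saved as manifest.yml.
MANIFEST="${MANIFEST:-manifest.yml}"
# Must match the name listed under "services" in the manifest.
SERVICE_NAME="${SERVICE_NAME:-df-mysql}"

# Print the command when DRY_RUN=1, otherwise execute it.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

deploy_dataflow_server() {
  run cf create-service p-mysql 512mb "$SERVICE_NAME"
  run cf push -f "$MANIFEST"
}

# Uncomment to deploy; set DRY_RUN=1 first to only print the commands.
# deploy_dataflow_server
```

Running it with DRY_RUN=1 first is a cheap way to check the service name and manifest path before anything is created in the space.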

Navigate to the Spring Cloud Data Flow server dashboard to make sure that the application is deployed successfully.

http://data-flow-server.local.pcfdev.io/dashboard/index.html#/apps/apps

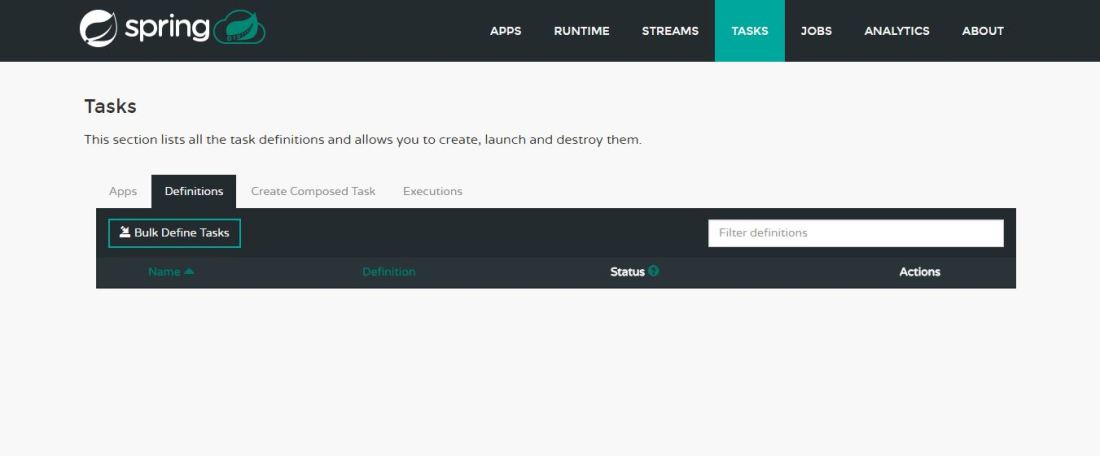

At this point, no apps or tasks are registered with the Data Flow server.

Spring Cloud Data Flow Shell & App Registration

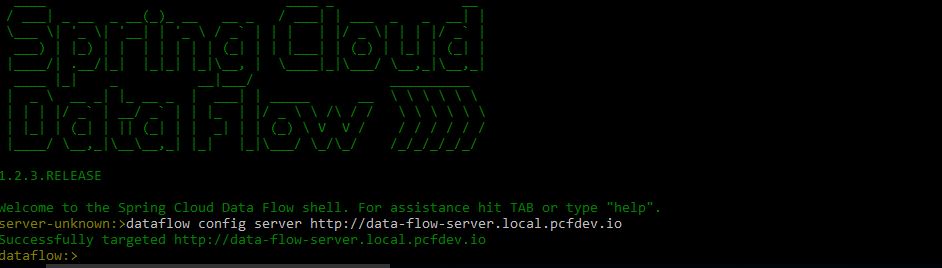

Get the latest Spring Cloud Data Flow shell application from the Spring repository and run the jar to start the shell.

java -jar spring-cloud-dataflow-shell-1.2.3.RELEASE.jar

Point the shell at the Data Flow server by running the following command in the shell:

dataflow config server http://data-flow-server.local.pcfdev.io

The next step is to register the payment processing batch application as a task with the Data Flow server. This is the same batch application that we deployed on PCF as a task in my previous post.

We need to provide the location of the jar file for the batch application that will be executed as a task. The Spring Cloud Data Flow server fetches the jar from the specified URI before executing the task. Here we fetch the jar from an AWS S3 bucket; in a production environment it would typically come from a software repository such as Nexus.

app register --name payment-processing-batch --type task --uri https://s3.us-east-2.amazonaws.com/aman-dataflowtest/payment-processing-batch-0.0.1-SNAPSHOT.jar
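For the repository-based setup mentioned above, Data Flow also accepts maven:// URIs, which the server resolves against its configured remote repositories (the MAVEN_REMOTE_REPOSITORIES_REPO1_URL entry in the manifest). The sketch below only builds the register command for a set of Maven coordinates. The maven_register_command helper is illustrative, and com.example is a hypothetical groupId, since the real one does not appear in this post.

```shell
#!/bin/sh
# Build an "app register" command that points at Maven coordinates instead of an HTTP URL.
set -eu

# Prints a Data Flow shell command.
# Arguments: app name, groupId, artifactId, version.
maven_register_command() {
  name="$1"; group="$2"; artifact="$3"; version="$4"
  printf 'app register --name %s --type task --uri maven://%s:%s:%s\n' \
    "$name" "$group" "$artifact" "$version"
}

# com.example is a hypothetical groupId; artifact and version follow the jar name used above.
maven_register_command payment-processing-batch com.example payment-processing-batch 0.0.1-SNAPSHOT
```

The printed line can be pasted into the Data Flow shell in place of the S3-based command, provided the artifact is published to a repository the server can reach.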

The next step is to create a task by executing the following command in the shell:

task create payment-processing-batch-task --definition payment-processing-batch

We can view the list of tasks created by executing the following command:

task list

Finally, launch the task by running the following command:

task launch payment-processing-batch-task
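The register, create, list and launch commands can also be collected into a file and replayed without typing them interactively. The sketch below writes them to a command file; the write_dataflow_commands helper and the dataflow-commands.txt file name are arbitrary choices for this example.

```shell
#!/bin/sh
# Write the Data Flow shell commands from this section to a file for non-interactive use.
set -eu

APP_URI="https://s3.us-east-2.amazonaws.com/aman-dataflowtest/payment-processing-batch-0.0.1-SNAPSHOT.jar"

# $1: path of the command file to create (overwritten if it exists).
write_dataflow_commands() {
  cat > "$1" <<EOF
app register --name payment-processing-batch --type task --uri $APP_URI
task create payment-processing-batch-task --definition payment-processing-batch
task list
task launch payment-processing-batch-task
EOF
}

# The file name is an arbitrary choice for this sketch.
COMMAND_FILE="${TMPDIR:-/tmp}/dataflow-commands.txt"
write_dataflow_commands "$COMMAND_FILE"
cat "$COMMAND_FILE"
```

Assuming the shell version used here supports the --dataflow.uri and --spring.shell.commandFile options, the file can then be passed at startup, for example: java -jar spring-cloud-dataflow-shell-1.2.3.RELEASE.jar --dataflow.uri=http://data-flow-server.local.pcfdev.io --spring.shell.commandFile=/tmp/dataflow-commands.txt. Passing the server address this way replaces the separate dataflow config server step.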

We can now see the application and tasks registered with the Spring Cloud Data Flow Server.

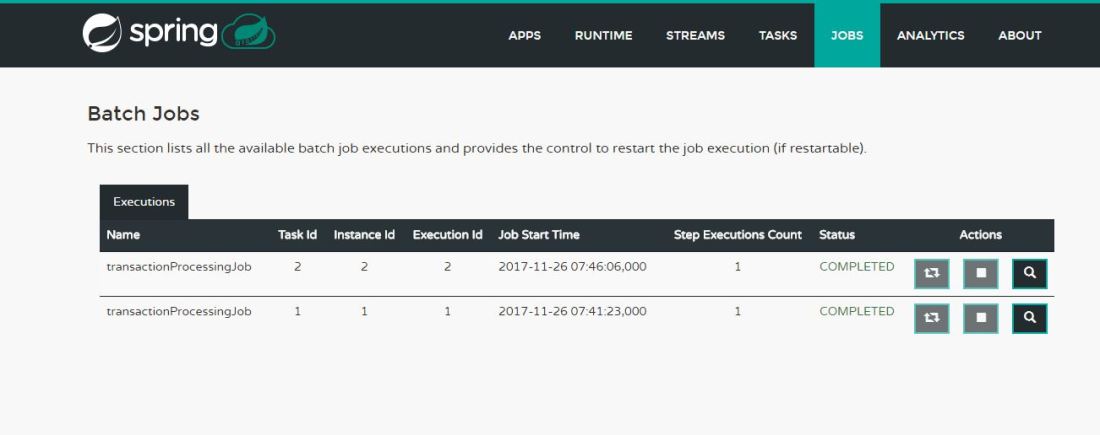

The dashboard also shows the Batch Job status for all the available task executions.